Winner, Outstanding Masters Research Award

This project was a human-following robot for fall detection, which I carried out independently at the Real-time and Embedded Control, Computing, and Communication (REC3) Lab for my graduate thesis.

As a student at Middle Tennessee State University, I was a Graduate Student Researcher in the REC3 Lab, supervised by Dr. Lei Miao.

For my Master’s thesis, I interfaced computer vision techniques with an existing Raspberry Pi-based robot prototype, the FAll DEtection Robot (FADER), to carry out person detection, distance estimation, one-dimensional and two-dimensional navigation, and fall detection, in that order.

To do this, I worked with computer vision, deep-learning models, the Arduino, and the Raspberry Pi, and I designed and built the detection system and hardware.

I chose this project because it tied together a number of my interests: robotics, computer vision, making, and real-world relevance and applicability. Additionally, falls were estimated to cost as much as $67 billion in the US in 2020. This research could help a lot of people physically, financially, and psychologically.

The aim is to have such mobile robots in the homes of elderly people who live alone, detecting when they fall so they can get help fast.

The advantages of our approach are that it is:

- Non-invasive (with respect to body)/non-participatory, i.e., the elderly person does not need to remember to wear or carry anything

- Mobile and portable

- Low-cost

- Easy to assemble

- Designed as an isolated system, and so non-invasive with respect to privacy. This allowed me to address the privacy concerns associated with computer vision while leveraging advances in machine learning in the field.

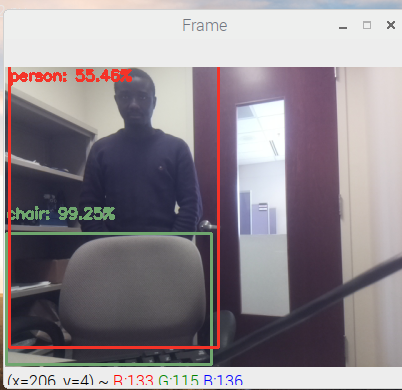

As of December 2018 (Fall 2018), I had achieved detection, distance estimation, and navigation in 1-D space (see images and video below).

Detection: I integrated a Pi Camera with FADER. To do this, I tested the two versions of the Pi Camera, the standard and the Pi NoIR, and chose the Pi NoIR. Next, we considered face detection, face recognition, and object detection as candidate detection approaches. We chose deep-learning-based object detection, specifically Single Shot Detection (SSD) as the framework and MobileNets as the backbone architecture.
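As a rough illustration of the detection step, the sketch below filters the raw output of an SSD-style detector for people. It assumes the output layout that OpenCV's `dnn` module produces for SSD models (a `1 x 1 x N x 7` array whose rows are `[image_id, class_id, confidence, x1, y1, x2, y2]` with normalized corners) and a VOC-trained MobileNet-SSD in which class 15 is "person". The function name, the confidence cutoff, and the class index are assumptions for illustration, not details taken from the thesis.

```python
import numpy as np

PERSON_CLASS_ID = 15   # assumption: VOC-trained MobileNet-SSD label map
MIN_CONFIDENCE = 0.5   # assumption: illustrative cutoff


def person_boxes(detections, frame_w, frame_h,
                 min_conf=MIN_CONFIDENCE, person_id=PERSON_CLASS_ID):
    """Return (confidence, x1, y1, x2, y2) pixel boxes for detected people."""
    scale = np.array([frame_w, frame_h, frame_w, frame_h])
    boxes = []
    for row in detections[0, 0]:
        class_id, conf = int(row[1]), float(row[2])
        if class_id != person_id or conf < min_conf:
            continue  # skip other classes and weak detections
        # Convert normalized corners to pixel coordinates
        x1, y1, x2, y2 = np.rint(row[3:7] * scale).astype(int)
        boxes.append((conf, int(x1), int(y1), int(x2), int(y2)))
    # Highest-confidence detection first
    return sorted(boxes, reverse=True)


# Synthetic detector output: one person, one non-person, one weak person
fake = np.array([[[[0, 15, 0.75, 0.25, 0.125, 0.75, 0.875],
                   [0, 7, 0.875, 0.0, 0.0, 0.5, 0.5],
                   [0, 15, 0.25, 0.125, 0.125, 0.25, 0.25]]]],
                dtype=np.float32)
print(person_boxes(fake, 640, 480))
```

On the robot, the `detections` array would come from running the network on a camera frame; the synthetic array here only stands in for that output.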

Distance Estimation: To solve the problem of distance estimation, I used linear regression to obtain a mathematical relationship between the distance of the robot from the person detected and the dimensions of the detection bounding box.
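A minimal sketch of this idea, assuming the bounding-box height is the dimension used and that a straight-line fit is adequate over the working range. The calibration pairs below are invented for illustration and are not measurements from the thesis; `estimate_distance` is a hypothetical helper name.

```python
import numpy as np

# Hypothetical calibration pairs (invented for illustration):
# bounding-box height in pixels vs. measured distance in metres.
box_heights_px = np.array([400.0, 300.0, 200.0, 100.0])
distances_m = np.array([1.0, 2.0, 3.0, 4.0])

# Least-squares straight line: distance = slope * height + intercept
slope, intercept = np.polyfit(box_heights_px, distances_m, deg=1)


def estimate_distance(box_height_px):
    """Predict the distance to the person from the box height."""
    return slope * box_height_px + intercept


print(round(estimate_distance(250.0), 2))
```

The fitted slope is negative because the box shrinks as the person moves away from the camera.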

1-D Navigation: Using a threshold-based algorithm (TBA) and the estimated distances, we realized three states for 1-D navigation: approaching, waiting, and retreating.
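The three states can be sketched as a comparison of the estimated distance against two thresholds. The threshold values and the function name below are assumptions chosen for illustration, not the values used on FADER.

```python
NEAR_M = 1.0  # assumption: closer than this, the robot backs away
FAR_M = 2.0   # assumption: farther than this, the robot moves closer


def navigation_state(distance_m, near=NEAR_M, far=FAR_M):
    """Map an estimated distance to one of the three 1-D navigation states."""
    if distance_m > far:
        return "approaching"   # person is too far: drive forward
    if distance_m < near:
        return "retreating"    # person is too close: back up
    return "waiting"           # inside the comfortable band: stay put


for d in (3.0, 1.5, 0.5):
    print(d, navigation_state(d))
```

Keeping a band between the two thresholds, rather than a single cutoff, avoids the robot oscillating between forward and backward motion when the person stands near the boundary.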

Next, we achieved navigation in 2-D space and fall detection, and tested the robot in both mildly occluded and more occluded lab spaces.

2-D Navigation: 2-D navigation was broken down into three parts: tracking, turning/pivoting, and 1-D navigation.

2-D Navigation: Tracking + Turning/Pivoting + 1-D Navigation

We achieved both tracking and pivoting using threshold-based algorithms that compare the coordinates of the center of the detected person with the coordinates of the center of the camera frame. As part of 2-D navigation, I made the following modifications to the initial FADER prototype:

- Adding an Arduino Uno to work in a master-slave configuration with the Raspberry Pi

- Restricting motor control from four independent wheels to two independent sides

- Using encoders to measure the distance covered by the robot

- Adding a separate power supply for the Raspberry Pi to minimize noise

- Implementing a Seeking Function in the software
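The tracking and pivoting decision described before this list can be sketched as a comparison of the person's horizontal center against the frame center, with a dead band around the middle. The command names, the dead-band width, and the use of a "seek" command when nobody is detected are assumptions for illustration; the actual thresholds and seeking behavior are described in the thesis.

```python
def pivot_command(person_cx, frame_width, dead_band_px=40):
    """Choose a turning command from the person's horizontal position.

    person_cx is the x coordinate of the detected person's center in
    pixels, or None when no person was detected in the frame.
    """
    if person_cx is None:
        return "seek"              # nobody in view: look for the person
    offset = person_cx - frame_width / 2
    if abs(offset) <= dead_band_px:
        return "hold_heading"      # person is roughly centered
    # Assumes a non-mirrored image: negative offset means person is left
    return "pivot_left" if offset < 0 else "pivot_right"


for cx in (None, 320, 100, 600):
    print(cx, pivot_command(cx, frame_width=640))
```

Once the heading is held, control can pass to the 1-D navigation states, which matches the breakdown of 2-D navigation into tracking, pivoting, and 1-D navigation.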

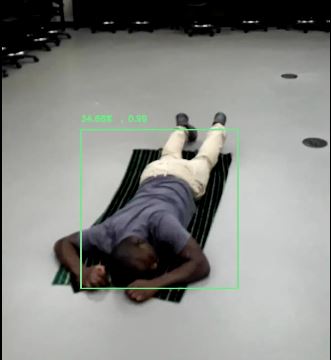

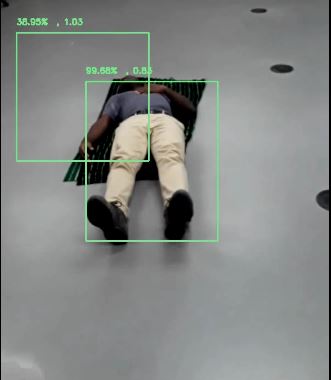

Fall Detection: Using our detection software, I tested videos from the internet showing falls, as well as videos recorded in our lab spaces simulating falls in five positions: lying on the back, lying on the stomach, lying on the left side, lying on the right side, and slumping to a seated position with the back resting against the wall. These tests gave us two metrics for fall detection, and we implemented a two-stage threshold-based algorithm to detect when a fall has occurred.
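This page does not name the two metrics, so the sketch below only illustrates the general shape of a two-stage threshold check. It assumes, purely for illustration, that the first metric is the bounding-box aspect ratio (width over height) and the second is how low the box center sits in the frame; both metrics, both thresholds, and the function name are hypothetical and may differ from what the thesis uses.

```python
def is_fall(box, frame_height, min_aspect=1.2, min_center_frac=0.6):
    """Two-stage threshold check on a person bounding box (x1, y1, x2, y2).

    Both metrics and both thresholds are illustrative assumptions.
    """
    x1, y1, x2, y2 = box
    width, height = x2 - x1, y2 - y1
    if height <= 0:
        return False  # degenerate box: nothing to evaluate

    # Stage 1: a lying person tends to give a box wider than it is tall
    if width / height < min_aspect:
        return False

    # Stage 2: the box center should sit low in the frame (near the floor)
    center_frac = ((y1 + y2) / 2) / frame_height
    return center_frac >= min_center_frac


print(is_fall((200, 50, 300, 450), frame_height=480))   # tall, upright box
print(is_fall((100, 380, 500, 460), frame_height=480))  # wide box near floor
```

Running the second stage only when the first one passes keeps the check cheap for the common case of an upright person.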

Simulated Fall Positions

Testing: We carried out a preliminary test in our lab space with one person and then extended tests with six people in two spaces (mildly occluded and more occluded) in our lab. Results showed a precision of 100% and satisfactory sensitivity. The video below shows one of our tests. The video at the beginning of this page shows the view from the Raspberry Pi running on FADER.
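For readers unfamiliar with the two measures, the sketch below shows how precision and sensitivity (recall) are computed from counts of true positives, false positives, and false negatives. The counts in the example are invented for illustration and are not the thesis results; a precision of 100% simply corresponds to zero false positives.

```python
def precision(tp, fp):
    """Fraction of reported falls that were real falls."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def sensitivity(tp, fn):
    """Fraction of real falls that were reported."""
    return tp / (tp + fn) if (tp + fn) else 0.0


# Invented counts for illustration only (not the thesis data)
tp, fp, fn = 8, 0, 2
print(f"precision={precision(tp, fp):.2f}, sensitivity={sensitivity(tp, fn):.2f}")
```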

My thesis can be found here and a recent poster presentation can be found here.